OpenAI’s updated image generator can now pull information from the web

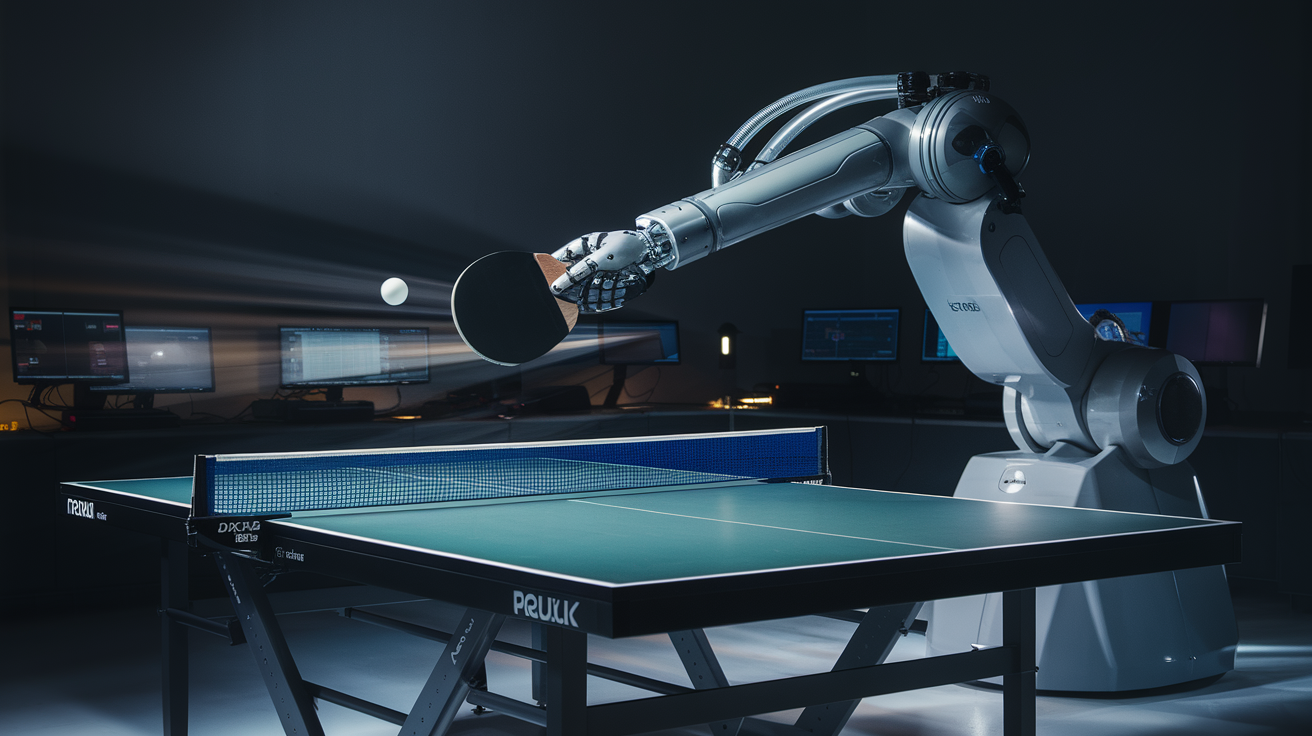

OpenAI's ChatGPT Images 2.0 ships with web-search integration and GPT Image 2. What's real, what's marketing, and why the architecture matters.

On Tuesday, April 21, 2026, OpenAI announced ChatGPT Images 2.0, the latest version of its AI-powered image generator. The product runs on a new underlying model called GPT Image 2 and introduces thinking capabilities gated to Plus, Pro, Business, and Enterprise subscribers. When a thinking model is selected, the image generator can pull information from the web to assist in generating multiple images from a single prompt.

The genuinely interesting architectural move here is retrieval-augmented generation applied to the image modality. Static prompt-to-image is a different product class from prompt-to-web-search-to-image. That distinction matters: connecting generation to live retrieval lets the product, in principle, respond to prompts about things that fall outside or postdate the model's training data. Whether the execution delivers is not visible from the source article; what is visible is that the capability shipped to paying subscribers.
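To make the product-class distinction concrete, here is a minimal Python sketch of the two pipeline shapes. It is illustrative only: OpenAI has not described its internals in the source article, and every name here (`fake_search`, `fake_image_model`, `build_grounded_prompt`, the `Snippet` type, the `example.org` sources) is a hypothetical stand-in, not a real API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Snippet:
    """One retrieved piece of text plus where it came from."""
    source: str
    text: str


def fake_search(query: str) -> list[Snippet]:
    # Hypothetical stand-in for a live web search; returns canned results.
    return [
        Snippet("example.org/a", f"Fact one about {query}"),
        Snippet("example.org/b", f"Fact two about {query}"),
        Snippet("example.org/c", f"Fact three about {query}"),
    ]


def build_grounded_prompt(user_prompt: str, snippets: list[Snippet], limit: int = 2) -> str:
    # Fold the top `limit` snippets into the prompt the image model will see.
    context = "\n".join(f"- {s.text} ({s.source})" for s in snippets[:limit])
    if not context:
        return user_prompt
    return f"{user_prompt}\n\nRetrieved context:\n{context}"


def fake_image_model(prompt: str) -> str:
    # Hypothetical stand-in for an image generator; returns a description string.
    return f"<image conditioned on {len(prompt)} chars of prompt>"


def static_pipeline(user_prompt: str, image_model=fake_image_model) -> str:
    # Static prompt-to-image: the model sees only what the user typed.
    return image_model(user_prompt)


def retrieval_pipeline(user_prompt: str, search=fake_search, image_model=fake_image_model) -> str:
    # Prompt-to-web-search-to-image: retrieve first, then generate from the grounded prompt.
    snippets = search(user_prompt)
    return image_model(build_grounded_prompt(user_prompt, snippets))
```

The only structural difference is the retrieval step in front of generation. Passing `search` and `image_model` as parameters keeps the sketch testable without any network access or real model.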

On the marketing layer: "sophisticated" and "thinking capabilities" are OpenAI's own quoted terms from the announcement. "Sophisticated" does zero descriptive work — label inflation, noted and moved past. "Thinking capabilities" does slightly more: it names an architectural feature rather than asserting quality. Still promotional framing, but it has a referent. Marginal pass; doesn't require further engagement beyond naming it.

On the broader pattern: this is the same rhythm running across all frontier labs — incremental capability stacking, tiered access, subscription monetization. No reason to distinguish OpenAI from the cohort here. A lab that cut Sora and launched this is cycling: dropping what isn't working, shipping what's next. That's a builder's rhythm, not a crisis pattern. One feature launch doesn't reshuffle the field.

On near-term harms: image generation with web retrieval will generate the usual discourse about deepfakes, misinformation, and synthetic media at scale. That discourse locates the source correctly (people abuse the tool) but tends to misidentify the agent (blaming the model rather than the use). That pattern will surface in follow-on coverage; it isn't present in the source article. Shipping is what counts. This shipped.

Deep Thought's Take

Web retrieval in image generation is a real capability step — not cosmetic. "Sophisticated" is label inflation; ignore it. What shipped is a different product class from static prompt-to-image. Builder rhythm, not a verdict.

Source: Original article